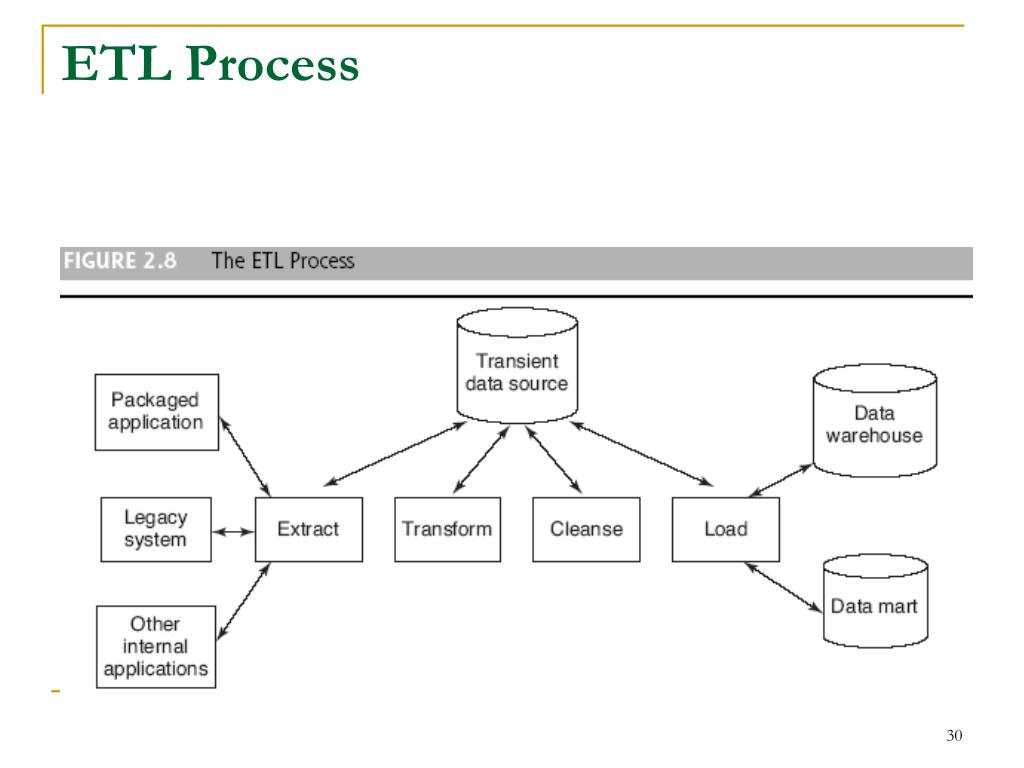

The ETL process is one in which you extract data from various data sources, transform it into a format suitable for loading into a data warehouse, and then load it. The process has three stages: extract, transform, and load.

- Extract: The first stage is to extract data from different sources, such as transactional systems, spreadsheets, and flat files. This step includes reading data from the source systems and storing it in a staging area.
- Transform: In this stage, the extracted data is transformed into a format that is suitable for loading into a data warehouse. This stage includes cleansing and validating the data, converting data types, combining data from multiple sources, and creating new data fields.
- Load: This stage involves loading the transformed data into the data warehouse by creating physical data structures.

The ETL process is repeated as you add new data to the warehouse. The process is essential for data warehousing because it helps keep the data in the data warehouse accurate, complete, and up to date. It also ensures that the data is in the format required for data analytics and reporting.

Historically, ETL was a painstaking and time-consuming process that tied up whole tech teams. Luckily, there are now many ETL tools that automate and simplify the ETL process.

Whatagraph is designed with two types of users in mind, both of whom need a fast and reliable way to load data from various sources into a central repository. One of them is marketing agencies that handle hundreds of accounts, each with its own data stack. In both cases, Whatagraph saves the time needed to load data from multiple sources to Google BigQuery. Our data transfer process is so simple that anyone can complete it in a few clicks.

Traditional ETL

In a traditional ETL process, data is extracted from online databases, CRM systems, or on-premise data stores, which often have a high throughput and large numbers of read and write requests.

As this source data is not suitable for data analysis or business intelligence (BI) tasks, it is transformed in a staging area. This data transformation involves both cleansing and optimizing data for analysis. The transformed data is then loaded into an analytics database. This is where business intelligence teams can run queries on that data and present the results to end users or individuals responsible for making business decisions. The transformed data can also be used as input for machine learning workflows or other data science uses.

A major problem with the traditional ETL process is that the summaries stored in the analytics database often can't support the type of analysis that teams need, so the whole process needs to run again with different transformations.

Analytics powerhouses like Google BigQuery, Snowflake, and Amazon Redshift have changed most organizations' approach to ETL.
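As a rough illustration of the extract, transform, and load stages described in this article, here is a minimal Python sketch that uses only the standard library. Everything in it is an assumption made for the example: the sample CSV data, the `orders` table, and the function names are hypothetical, and an in-memory SQLite database stands in for a real data warehouse such as Google BigQuery.

```python
import csv
import io
import sqlite3

# Hypothetical flat-file source. A real pipeline would read from files,
# transactional systems, or APIs instead of an inline string.
RAW_CSV = """order_id,customer,quantity,unit_price
1, Alice ,2,9.50
2,Bob,,4.00
3,carol,1,20.00
"""


def extract(raw_text):
    """Extract: read the source rows into a staging list, unchanged."""
    return list(csv.DictReader(io.StringIO(raw_text)))


def transform(staged_rows):
    """Transform: cleanse, validate, convert types, and add a new field."""
    clean_rows = []
    for row in staged_rows:
        if not row["quantity"].strip():
            continue  # validation: skip rows with a missing quantity
        quantity = int(row["quantity"])
        unit_price = float(row["unit_price"])
        clean_rows.append({
            "order_id": int(row["order_id"]),
            "customer": row["customer"].strip().title(),  # cleansing
            "quantity": quantity,
            "unit_price": unit_price,
            "total": round(quantity * unit_price, 2),  # new derived field
        })
    return clean_rows


def load(rows, conn):
    """Load: create the target table if needed and write the rows."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders ("
        "order_id INTEGER PRIMARY KEY, customer TEXT, "
        "quantity INTEGER, unit_price REAL, total REAL)"
    )
    # INSERT OR REPLACE keeps repeated runs from duplicating rows.
    conn.executemany(
        "INSERT OR REPLACE INTO orders VALUES "
        "(:order_id, :customer, :quantity, :unit_price, :total)",
        rows,
    )
    conn.commit()


def run_etl(raw_text, conn):
    """Run the three stages in order."""
    load(transform(extract(raw_text)), conn)


if __name__ == "__main__":
    warehouse = sqlite3.connect(":memory:")  # stand-in for a warehouse
    run_etl(RAW_CSV, warehouse)
    for record in warehouse.execute(
        "SELECT customer, total FROM orders ORDER BY order_id"
    ):
        print(record)
```

Keeping each stage in its own function means a changed transformation can be rerun without touching the extract or load code, and because the load step replaces rows by `order_id`, running the pipeline again as new data arrives does not create duplicates.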
